Trust the Data, Not Your Imagination

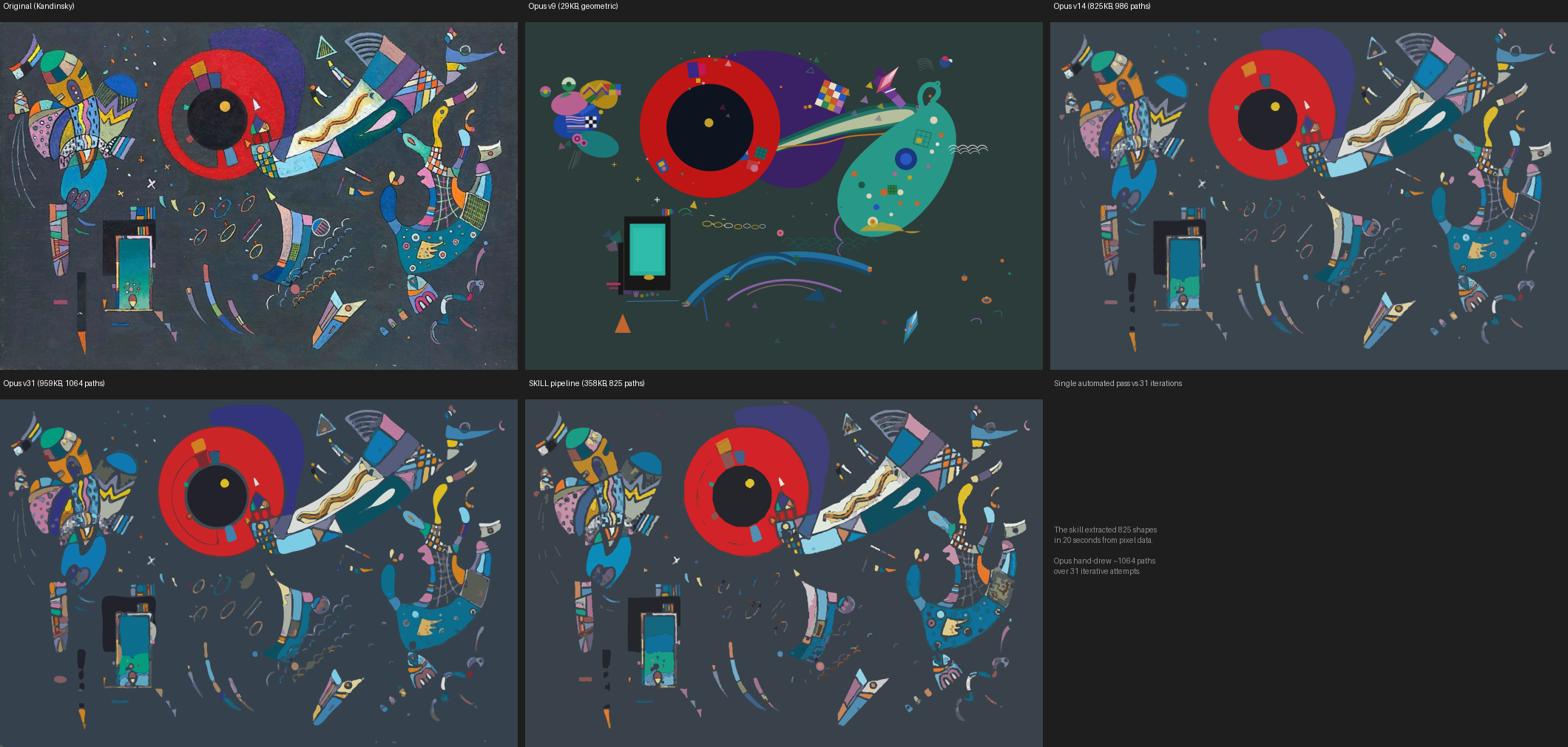

Claude Opus can look at an image, understand what it sees, and write SVG code to reproduce it. Over 31 painstaking iterations on Kandinsky's Around the Circle (1940), it produced increasingly faithful reproductions — hand-drawing each path from visual interpretation, adjusting colors by eye, layering shapes based on artistic judgment.

The result after all that work: 1,064 paths, 959KB, and a recognizable reproduction.

Then I ran a 20-second automated pipeline on the same image and got equivalent fidelity at half the file size.

The Experiment

The starting point was three SVG snapshots from a marathon Opus session — versions 9, 14, and 31 of 31 attempts to reproduce Kandinsky's painting. Version 9 was the earliest approach: clean geometric primitives (circles, rectangles, polygons) placed by visual interpretation. It looked like a diagram of the painting rather than a reproduction. By version 14, Opus had switched to organic traced paths, and the biomorphic forms finally started to flow. Versions 14 through 31 were incremental refinements within that paradigm.

Watching this progression made the failure mode obvious: Claude's visual interpretation of images is unreliable for precise color matching, shape positioning, and spatial relationships. Each iteration was an LLM guessing at hex values, estimating coordinates, and hoping the shapes would land in the right place. Sometimes they did. Often they didn't. 31 iterations is a lot of correction cycles.

So I built a skill around a different principle: every shape, color, and position must come from computational analysis of the source pixels.

The Pipeline

The pipeline is straightforward computer vision:

- Preprocessing — bilateral filter to remove texture while preserving edges, then gentle Gaussian blur

- Color quantization — K-means clustering (K=28–36) on the pixel color space, reducing millions of colors to a manageable palette

- Background detection — identify background clusters by edge contact (colors that dominate image borders)

- Contour extraction — for each color cluster, extract filled contours via OpenCV, simplify with

approxPolyDP - Z-ordering — painter's algorithm, largest shapes first

- SVG assembly — emit paths with extracted hex colors

No visual interpretation involved. The pipeline doesn't know it's looking at a Kandinsky — it sees pixel clusters and contour boundaries.

Kandinsky: The Scorecard

| v9 | v14 | v31 | Skill | |

|---|---|---|---|---|

| RMSE | 55.2 | 22.3 | 23.3 | 23.5 |

| Mean ΔE | 69.4 | 21.6 | 23.4 | 21.5 |

| Correlation | 0.12 | 0.86 | 0.85 | 0.84 |

| File size | 29KB | 825KB | 959KB | 358KB |

| Paths | ~300 | 986 | 1,064 | 825 |

| Time | 31 iterations | 20 seconds | ||

The skill's single automated pass matches v14/v31 on fidelity metrics at less than half the file size. Mean color distance is actually the lowest among all SVG versions — because K-means extracted colors from actual pixels instead of Claude eyeballing hex values.

Here's the skill's Kandinsky reproduction as an SVG you can zoom into:

For comparison, here's Opus's early geometric attempt — version 9 — to illustrate the gap that visual interpretation has to cross:

The Sfumato Stress Test

Kandinsky's geometric abstraction is arguably the easy case for flat-fill SVG — the painting already consists of discrete color regions. The real test: Leonardo's Mona Lisa, where the entire technique is built on invisible tonal gradients.

The numbers are counterintuitively good: RMSE 19.0, correlation 0.93 — better than Kandinsky. That's because the Mona Lisa has large continuous tonal regions; even quantized into 36 colors, the big shapes occupy roughly the right spatial areas and the pixel error stays low.

But the numbers lie about perceptual quality. Look at the face. Leonardo's sfumato — the invisible transitions between light and shadow at the corners of the mouth, the modeling around the eyes — gets carved into discrete zones with hard edges. The famous smile, which exists entirely in the gradient between light and shadow, becomes a harder line. The result has a woodcut quality that's immediately visible even though the metrics say it's close.

What This Shows

The interesting finding isn't that computer vision beats visual interpretation — that's expected. It's the specific failure modes on each side.

Visual interpretation fails at precision. When Claude hand-draws SVG from looking at an image, it gets the gestalt right but the details wrong. Colors drift. Shapes land in approximately the right place. Each iteration fixes some errors and introduces others. 31 iterations is a lot of human-in-the-loop correction for a result that an automated pipeline matches in seconds.

Automated extraction fails at semantics. The pipeline doesn't know that the red circle should be perfectly round, or that the smile should be a smooth transition rather than a polygon boundary. It faithfully reproduces whatever the contour extraction finds — including artifacts from the K-means boundaries. It can't make the judgment calls that would push quality beyond what the pixel data directly provides.

Both hit the same ceiling. The quantized image (RMSE 9.9 for Kandinsky, 11.8 for Mona Lisa) shows there's still a significant gap between what the pixel data knows and what contour extraction can represent. That gap is texture — the micro-variation in brushwork, the granular color transitions, the material quality of paint on canvas. Flat-fill SVG polygons can't capture it without fundamentally different techniques: SVG filters, gradient meshes, noise textures, or vastly more overlapping semi-transparent paths.

The Skill

The image-to-svg skill (v1.0.0) is available in the claude-skills repo. It's designed for Claude.ai's containerized compute environment — opencv-python-headless, scikit-image, scipy, and librsvg2-bin for rendering verification.

The core principle that emerged from watching 31 iterations fail in instructive ways: trust the data, not your imagination. Claude's visual interpretation is unreliable for precise spatial reasoning. Every shape, color, and position should come from computational analysis of source pixels. The skill codifies this into a pipeline with explicit anti-patterns — never hand-draw shapes, never claim a fix works without rendering, never boost saturation globally.

For photographic or painterly images, the current pipeline hits the flat-fill ceiling hard. A v2 could explore higher K values (48–64), finer polygon approximation, hierarchical contour extraction for nested shapes, and optional SVG filters to re-soften skin tones. But for graphic art, illustrations, and geometric compositions, a single pass already matches what iterative visual interpretation achieves — at a fraction of the cost.

All reproductions generated by Claude using OpenCV and K-means clustering. Kandinsky's Around the Circle (1940) and the Mona Lisa are in the public domain. The image-to-svg skill is open source.